We live in a world of self-generating beliefs which remain largely untested.

We adopt those beliefs because they are based on conclusions, which are inferred from what we observe, plus our past experience.

Our ability to achieve the results we truly desire is eroded by our feelings that:

- Our beliefs are the truth.

- The truth is obvious.

- Our beliefs are based on real data.

- The data we select are the real data.

For example:

I am standing before the executive team, making a presentation. They all seem engaged and alert, except for Larry, at the end of the table, who seems bored out of his mind. He turns his dark, morose eyes away from me and puts his hand to his mouth. He doesn’t ask any questions until I’m almost done, when he breaks in: “I think we should ask for a full report.”

In this culture, that typically means, “Let’s move on.”

Everyone starts to shuffle their papers and put their notes away. Larry obviously thinks that I’m incompetent — which is a shame, because these ideas are exactly what his department needs. Now that I think of it, he’s never liked my ideas. Clearly, Larry is a power-hungry jerk.

By the time I’ve returned to my seat, I’ve made a decision: I’m not going to include anything in my report that Larry can use. He wouldn’t read it, or, worse still, he’d just use it against me. It’s too bad I have an enemy who’s so prominent in the company.

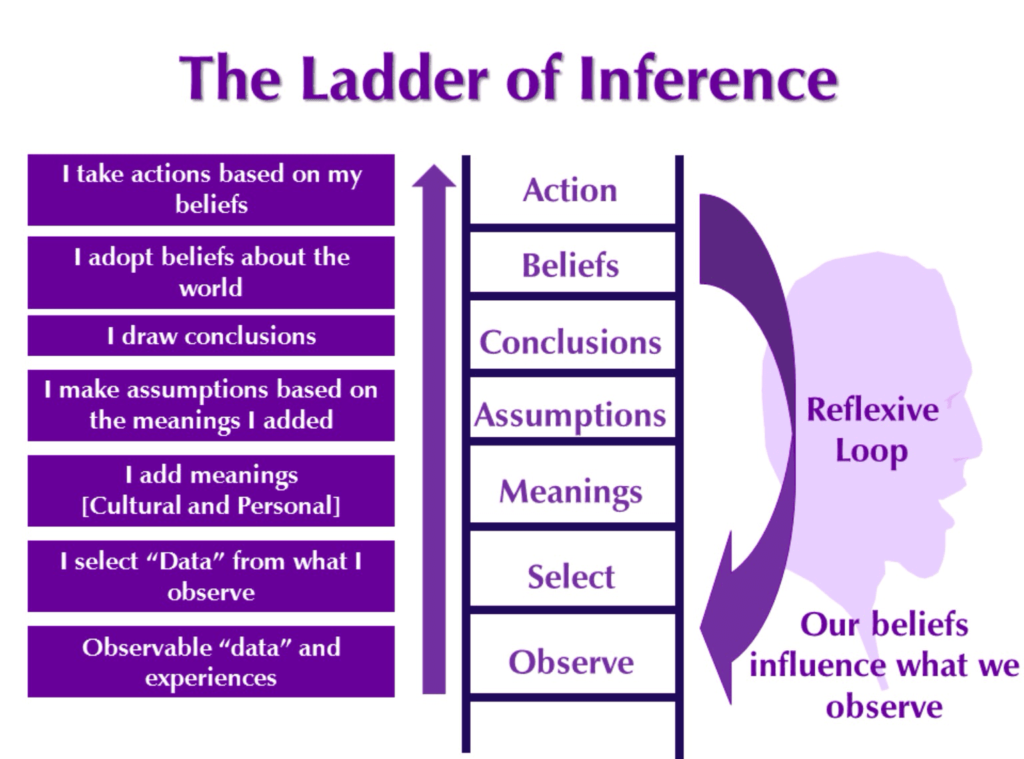

In those few seconds before I take my seat, I have climbed up what Chris Argyris calls a “ladder of inference”, a common mental pathway of increasing abstraction, often leading to misguided beliefs:

- I started with the observable data: Larry’s comment, which is so self-evident that it would show up on a videotape recorder …

- … I selected some details about Larry’s behaviour: his glance away from me and apparent yawn (I didn’t notice him listening intently one moment before) …

- … I added some meanings of my own, based on the culture around me (that Larry wanted me to finish up) …

- … I moved rapidly up to assumptions about Larry’s current state (he’s bored) …

- … and I concluded that Larry, in general, thinks I’m incompetent. In fact, I now believe that Larry (and probably everyone whom I associate with Larry) is dangerously opposed to me …

- … thus, as I reach the top of the ladder, I’m plotting against him.

It all seems so reasonable, and it happens so quickly, that I’m not even aware I’ve done it.

Moreover, all the rungs of the ladder take place in my head. The only parts visible to anyone else are the directly observable data at the bottom, and my own decision to take action at the top. The rest of the trip, the ladder where I spend most of my time, is unseen, unquestioned, not considered fit for discussion, and enormously abstract. (These leaps up the ladder are sometimes called “leaps of abstraction.”)

I’ve probably leaped up that ladder of inference many times before.

The more I believe that Larry is an evil guy, the more I reinforce my tendency to notice his malevolent behaviour in the future. This phenomenon is known as the “reflexive loop”: our beliefs influence what data we select next time. And there is a counterpart reflexive loop in Larry’s mind: as he reacts to my strangely antagonistic behaviour, he’s probably jumping up some rungs on his own ladder. For no apparent reason, before too long, we could find ourselves becoming bitter enemies.

Larry might indeed have been bored by my presentation, or he might have been eager to read the report on paper. He might think I’m incompetent, he might be shy, or he might be afraid to embarrass me. More likely than not, he has inferred that I think he’s incompetent.

We can’t know, until we find a way to check our conclusions.

Unfortunately, assumptions and conclusions are particularly difficult to test. For instance, suppose I wanted to find out if Larry really thought I was incompetent. I would have to pull him aside and ask him, “Larry, do you think I’m an idiot?” Even if I could find a way to phrase the question, how could I believe the answer? Would I answer him honestly? No, I’d tell him I thought he was a terrific colleague, while privately thinking worse of him for asking me.

Now imagine me, Larry and three others in a senior management team, with our untested assumptions and beliefs. When we meet to deal with a concrete problem, the air is filled with misunderstandings, communication breakdowns, and feeble compromises. Thus, while our individual IQs average 140, our team has a collective IQ of 85.

The ladder of inference explains why most people don’t usually remember where their deepest attitudes came from. The data is long since lost to memory, after years of inferential leaps. Sometimes I find myself arguing that “The Republicans are so-and-so,” and someone asks me why I believe that. My immediate, intuitive answer is, “I don’t know. But I’ve believed it for years.” In the meantime, other people are saying, “The Democrats are so-and-so,” and they can’t tell you why, either. Instead, they may dredge up an old platitude which once was an assumption. Before long, we come to think of our longstanding assumptions as data (“Well, I know the Republicans are such-and-such because they’re so-and-so”), but we’re several steps removed from the data.

Using the Ladder of Inference

You can’t live your life without adding meaning or drawing conclusions. It would be an inefficient, tedious way to live.

But you can improve your communications through reflection, and by using the ladder of inference in three ways:

- Making your thinking and reasoning more visible to others (advocacy);

- Becoming more aware of your own thinking and reasoning (reflection);

- Inquiring into others’ thinking and reasoning (inquiry).

Once Larry and I understand the concepts behind the “ladder of inference,” we have a safe way to stop a conversation in its tracks and ask several questions:

- What is the observable data behind that statement?

- Does everyone agree on what the data is?

- Can you run me through your reasoning?

- How did we get from that data to these abstract assumptions?

- When you said “[your inference],” did you mean “[my interpretation of it]”?

I can ask for data in an open-ended way: “Larry, what was your reaction to this presentation?”

I can test my assumptions: “Larry, are you bored?”

Or I can simply test the observable data: “You’ve been quiet, Larry.” To which he might reply: “Yeah, I’m taking notes; I love this stuff.”

Note that I don’t say, “Larry, I think you’ve moved way up the ladder of inference. Here’s what you need to do to get down.” The point of this method is not to nail Larry (or even to diagnose Larry), but to make our thinking processes visible, to see what the differences are in our perceptions and what we have in common. (You might say, “I notice I’m moving up the ladder of inference, and maybe we all are. What’s the data here?”)

This type of conversation is not easy. For example, as Chris Argyris cautions people, when a fact seems especially self-evident, be careful. If your manner suggests that it must be equally self-evident to everyone else, you may cut off the chance to test it. A fact, no matter how obvious it seems, isn’t really substantiated until it’s verified independently – by more than one person’s observation, or by a technological record (a tape recording or photograph).

Embedded into team practice, the ladder becomes a very healthy tool. There’s something exhilarating about showing other people the links of your reasoning. They may or may not agree with you, but they can see how you got there. And you’re often surprised yourself to see how you got there, once you trace out the links.

Rick Ross

Excerpt from The Fifth Discipline Fieldbook. Copyright 1994 by Peter M. Senge, Art Kleiner, Charlotte Roberts, Richard B. Ross, and Bryan J. Smith. Reprinted with permission.
